#include <AbstractApplication.h>

Public Member Functions

| AbstractApplication (int argc, char **argv) | |

| virtual | ~AbstractApplication () |

| virtual void | operator() (CParameterReader &paramReader) |

| virtual void | dealer (int argc, char **argv, AbstractApplication *pApp)=0 |

| virtual void | farmer (int argc, char **argv, AbstractApplication *pApp)=0 |

| virtual void | outputter (int argc, char **argv, AbstractApplication *pApp)=0 |

| virtual void | worker (int argc, char **argv, AbstractApplication *pApp)=0 |

| MPI_Datatype & | messageHeaderType () |

| MPI_Datatype & | requestDataType () |

| MPI_Datatype & | parameterHeaderDataType () |

| MPI_Datatype & | parameterValueDataType () |

| MPI_Datatype & | parameterDefType () |

| MPI_Datatype & | variableDefType () |

| unsigned | numWorkers () |

| void | forwardPassThrough (const void *pData, size_t nBytes) |

| int | getRequest () |

| void | sendEofs () |

| void | sendEof () |

| void | requestData (size_t maxBytes) |

| void | throwMPIError (int status, const char *reason) |

Protected Member Functions

| int | getArgc () const |

| char ** | getArgv () |

| void | makeDataTypes () |

Detailed Description

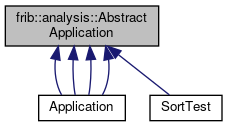

This class implements the strategy pattern for the dealer/worker/farmer/outputter parallel execution pattern in MPI. If an application runs with n MPI processes, their ranks are allocated as follows:

- 0 - Dealer

- 1 - Farmer

- 2 - Outputter

- 3 to n-1 - Workers.
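The rank allocation above can be sketched as a small mapping function. This is only an illustration: `Role`, `roleForRank` and `workerCount` are hypothetical names that do not appear in AbstractApplication, and no MPI calls are made.

```cpp
#include <stdexcept>

// Roles in the dealer/farmer/outputter/worker pattern.
enum class Role { Dealer, Farmer, Outputter, Worker };

// Map an MPI rank to its role, following the allocation listed above.
// Hypothetical helper, not a member of AbstractApplication.
Role roleForRank(int rank) {
    if (rank < 0) {
        throw std::invalid_argument("rank must be non-negative");
    }
    switch (rank) {
        case 0:  return Role::Dealer;
        case 1:  return Role::Farmer;
        case 2:  return Role::Outputter;
        default: return Role::Worker;   // ranks 3 and up
    }
}

// Number of workers for a given number of processes: everything except
// the dealer, the farmer and the outputter.
unsigned workerCount(int size) {
    return size > 3 ? static_cast<unsigned>(size - 3) : 0u;
}
```

With four processes this gives exactly one worker, which is the smallest configuration that can do any work.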

The dealer, rank 0, is connected to the data source. Workers send it requests for work items; the dealer responds either with data or with an end message indicating that there is no more data. When the dealer has sent end messages to all of the workers, it calls MPI_Finalize to do its part to exit the application.
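The dealer's bookkeeping can be illustrated with a single-process sketch. It assumes the work items are already held in memory and uses a `false` return value to stand for the end message; `DealerSketch` and its members are hypothetical and no MPI traffic is involved.

```cpp
#include <deque>
#include <string>
#include <utility>

// In-memory stand-in for the dealer role (hypothetical, no MPI).
class DealerSketch {
public:
    DealerSketch(std::deque<std::string> items, unsigned numWorkers)
        : items_(std::move(items)), numWorkers_(numWorkers) {}

    // Answer one worker request. Returns true and fills `item` while data
    // remains; returns false (the "end message") once the source is empty.
    bool respond(std::string& item) {
        if (!items_.empty()) {
            item = std::move(items_.front());
            items_.pop_front();
            return true;
        }
        ++endsSent_;
        return false;
    }

    // True once every worker has been sent an end message; this is the
    // point at which the real dealer would call MPI_Finalize.
    bool done() const { return endsSent_ >= numWorkers_; }

private:
    std::deque<std::string> items_;
    unsigned numWorkers_;
    unsigned endsSent_ = 0;
};
```

The sketch relies on each worker stopping its requests after it receives an end message, so that one end per worker is enough.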

The Farmer gets messages from the workers and re-orders them into the original order. When the dealer sends a work item, it is actually sent as a pair of messages. The first message contains a work item number and a payload size (the size of the following message), while the second message contains the payload itself, sent as an array of chars. The Farmer orders the work items by item number and sends them to the Outputter. End messages from the workers are counted; when the Farmer has received an end message from every worker, it sends an end message to the Outputter and calls MPI_Finalize to do its part to exit.
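The re-ordering step can be sketched with a small buffer keyed by work item number. This is one possible approach rather than the Farmer's actual implementation; it assumes item numbers start at 0 and are consecutive, and `ReorderBuffer` is a hypothetical name.

```cpp
#include <cstdint>
#include <map>
#include <string>
#include <utility>
#include <vector>

// Holds results that arrived early and releases them in item-number order.
// Assumption: item numbers start at 0 and have no gaps.
class ReorderBuffer {
public:
    // Accept a result tagged with its work item number. Returns every
    // payload that can now be released in order (possibly none).
    std::vector<std::string> push(std::uint64_t itemNumber, std::string payload) {
        pending_.emplace(itemNumber, std::move(payload));
        std::vector<std::string> ready;
        auto it = pending_.find(next_);
        while (it != pending_.end()) {
            ready.push_back(std::move(it->second));
            pending_.erase(it);
            ++next_;
            it = pending_.find(next_);
        }
        return ready;
    }

private:
    std::uint64_t next_ = 0;                        // next item to release
    std::map<std::uint64_t, std::string> pending_;  // results waiting their turn
};
```

A result that arrives ahead of its turn stays in the buffer until all earlier items have been released, so the output order matches the order in which the dealer numbered the items.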

The outputter gets messages from the farmer that are either results or end marks. The outputter is connected to the data sink and writes the messages from the farmer to it. When it receives an end message, it calls MPI_Finalize to do its part to exit.

Workers are responsible for actually doing the application-specific operations on the data. They request and receive blocks of data to operate on from the Dealer. They transform those blocks into output data, which they then send to the Farmer for re-ordering. If the dealer sends a worker an end message, the worker sends an end message to the farmer and calls MPI_Finalize to do its part to exit.

Each process type is implemented as a pure virtual method. The function call operator, operator():

- Calls MPI_Init.

- Uses the CParameterReader it was passed to read in the parameter configuration.

- If rank 0, determines the size of the application and whether it is sufficient to run at least one worker.

- Depending on the rank, invokes the appropriate strategy method to take on the appropriate role.

- When the strategy method exits, invokes MPI_Finalize and returns to the caller who, presumably, exits.

- Note

- operator() is also virtual to allow that logic to be overridden.
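The dispatch step in the list above can be sketched without MPI as follows. `RoleDispatcher`, `run` and `RecordingApp` are hypothetical stand-ins: the real operator() obtains the rank and size from MPI, reads parameters, and brackets the work with MPI_Init and MPI_Finalize, all of which are left out here. In this sketch every rank performs the size check, whereas the text above assigns it to rank 0.

```cpp
#include <stdexcept>
#include <string>

// Stand-in for the strategy pattern in AbstractApplication (no MPI).
class RoleDispatcher {
public:
    virtual ~RoleDispatcher() = default;

    // One pure virtual method per role, as in the class described above.
    virtual void dealer() = 0;
    virtual void farmer() = 0;
    virtual void outputter() = 0;
    virtual void worker() = 0;

    // Pick the role from the rank; refuse to run without at least one worker.
    void run(int rank, int size) {
        if (size < 4) {
            throw std::runtime_error("need at least 4 processes to run one worker");
        }
        switch (rank) {
            case 0:  dealer();    break;
            case 1:  farmer();    break;
            case 2:  outputter(); break;
            default: worker();    break;
        }
    }
};

// Concrete subclass that only records which role was invoked.
class RecordingApp : public RoleDispatcher {
public:
    std::string lastRole;
    void dealer() override    { lastRole = "dealer"; }
    void farmer() override    { lastRole = "farmer"; }
    void outputter() override { lastRole = "outputter"; }
    void worker() override    { lastRole = "worker"; }
};
```

Keeping the dispatch in the base class means a concrete application only has to supply the four role bodies.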

A typical use of this class would be:

    #include <AbstractApplication.h>
    #include <cstdlib>
    // Also include the header that declares CTCLParameterReader.

    using namespace frib::analysis;

    int main(int argc, char** argv) {
        // Define MyApplication, a concrete class derived from
        // AbstractApplication.

        CTCLParameterReader configReader("configFile.tcl");
        MyApplication app(argc, argv);
        app(configReader);

        // When we get here our role has called MPI_Finalize.

        exit(EXIT_SUCCESS);
    }

Constructor & Destructor Documentation

◆ AbstractApplication()

AbstractApplication::AbstractApplication (int argc, char **argv)

Constructor.

- Parameters

  - argc - number of command line arguments.

  - argv - pointer to the command line arguments.

◆ ~AbstractApplication()

virtual AbstractApplication::~AbstractApplication ()

Destructor.

Member Function Documentation

◆ forwardPassThrough()

void AbstractApplication::forwardPassThrough (const void *pData, size_t nBytes)

forwardPassThrough: sends bytes to the output without any real interpretation.

- Parameters

  - pData - data to send.

  - nBytes - number of bytes to send.

◆ getArgc()

int AbstractApplication::getArgc () const [protected]

getArgc

- Returns

- int - number of command line parameters after MPI_Init is done modifying them.


◆ getArgv()

char ** AbstractApplication::getArgv () [protected]

getArgv

- Returns

- char** - pointer to the list of argument pointers.

◆ getRequest()

int AbstractApplication::getRequest ()

getRequest: receives a request from a worker and returns the rank of the sender.

- Returns

- int - requesting worker.

◆ makeDataTypes()

void AbstractApplication::makeDataTypes () [protected]

makeDataTypes: creates any custom MPI data types we need and stores them in member data. Getters exist to fetch references to the data types created here.

◆ messageHeaderType()

MPI_Datatype & AbstractApplication::messageHeaderType ()

messageHeaderType: returns a reference to the MPI data type for a message header.

◆ numWorkers()

unsigned AbstractApplication::numWorkers ()

Returns the number of worker processes in the application. This is just size - 3, where size is the total number of MPI processes and the three excluded processes are the dealer, farmer and outputter.

◆ operator()()

virtual void AbstractApplication::operator() (CParameterReader &paramReader)

operator(): entry point to the MPI pattern.

- Parameters

  - paramReader - object that knows how to read the parameter file.

◆ parameterDefType()

MPI_Datatype & AbstractApplication::parameterDefType ()

parameterDefType

- Returns

- MPI_Datatype& - reference to the data type for a parameter definition.

◆ parameterHeaderDataType()

MPI_Datatype & AbstractApplication::parameterHeaderDataType ()

parameterHeaderDataType

- Returns

- MPI_Datatype& - reference to the header data type for a parameter message.

◆ parameterValueDataType()

MPI_Datatype & AbstractApplication::parameterValueDataType ()

parameterValueDataType

- Returns

- MPI_Datatype& - reference to the data type for parameter values.

◆ requestData()

void AbstractApplication::requestData (size_t maxBytes)

requestData: sends a request for data to the dealer.

- Parameters

  - maxBytes - maximum payload we want to accept.

◆ requestDataType()

MPI_Datatype & AbstractApplication::requestDataType ()

requestDataType: returns a reference to the MPI data type for a data request record.

◆ sendEof()

void AbstractApplication::sendEof ()

sendEof: gets a request from a worker and sends an EOF to that worker.

◆ sendEofs()

void AbstractApplication::sendEofs ()

sendEofs: sends an EOF to each of the workers.

◆ throwMPIError()

void AbstractApplication::throwMPIError (int status, const char *prefix)

throwMPIError: analyzes the status returned by an MPI call and throws a runtime error if the status is not normal.

- Parameters

  - status - status from the MPI call.

  - prefix - prefix to the error text from status.
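The check-and-throw idea behind throwMPIError can be sketched as follows. To keep the example buildable without MPI, `kSuccess` and `describeStatus` are hypothetical stand-ins; a real implementation would presumably compare against MPI_SUCCESS and obtain the text from MPI_Error_string, but the source does not show how throwMPIError does it.

```cpp
#include <stdexcept>
#include <string>

// Hypothetical stand-in for MPI_SUCCESS so the sketch builds without MPI.
constexpr int kSuccess = 0;

// Hypothetical stand-in for the text MPI_Error_string would supply.
std::string describeStatus(int status) {
    return "error code " + std::to_string(status);
}

// Throw std::runtime_error with `prefix` followed by the error text when
// the status is not the success value; otherwise do nothing.
void throwOnError(int status, const char* prefix) {
    if (status == kSuccess) {
        return;
    }
    throw std::runtime_error(std::string(prefix) + describeStatus(status));
}
```

Wrapping each call this way lets role methods report failures through ordinary C++ exception handling instead of checking return codes at every call site.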

◆ variableDefType()

MPI_Datatype & AbstractApplication::variableDefType ()

variableDefType

- Returns

- MPI_Datatype& - reference to the data type for variable values.

The documentation for this class was generated from the following files:

- base/AbstractApplication.h

- spectcl/workerTest.cpp

- AbstractApplication.cpp

1.8.13
